Fine-tuning BERT for Chinese Text Classification (PyTorch Implementation)

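BERT (Bidirectional Encoder Representations from Transformers) is a pre-trained model proposed by Google AI in October 2018. Its architecture is a stack of Transformer encoder layers. After pre-training on a large corpus, it is transferred to downstream tasks, where fine-tuning its parameters alone is enough to improve performance significantly. This article focuses on the code for fine-tuning Chinese BERT on a news-title classification task; for more on how BERT works, see The Illustrated BERT.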

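Loading the Data

The dataset is sampled from the Tsinghua news-title classification dataset (THUCNews). It has 10 classes with a balanced number of samples per class: 180,000 training samples, 20,000 development samples, and 20,000 test samples.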

import pandas as pd

# Local data paths
train_data_path = 'data/THUCNewsPart/train.txt'
dev_data_path = 'data/THUCNewsPart/dev.txt'
test_data_path = 'data/THUCNewsPart/test.txt'
label_path = 'data/THUCNewsPart/class.txt'

# Load the data (tab-separated, no header row)
train_df = pd.read_csv(train_data_path, sep='\t', header=None)
dev_df = pd.read_csv(dev_data_path, sep='\t', header=None)
test_df = pd.read_csv(test_data_path, sep='\t', header=None)

# Rename the columns
new_columns = ['text', 'label']
train_df = train_df.rename(columns=dict(zip(train_df.columns, new_columns)))
dev_df = dev_df.rename(columns=dict(zip(dev_df.columns, new_columns)))
test_df = test_df.rename(columns=dict(zip(test_df.columns, new_columns)))

# Load the label names (one per line; the line index is used as the class id)
real_labels = []
with open(label_path, 'r', encoding='utf-8') as f:
    for row in f:
        real_labels.append(row.strip())
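
The dataset is described as balanced across its 10 classes. A minimal sketch of how that claim could be checked is given below. It parses tab-separated `text<TAB>label` rows (the layout implied by `sep='\t'`) from a small in-memory sample rather than the real files; the helper name `label_distribution` and the sample rows are made up for illustration.

```python
import csv
import io
from collections import Counter


def label_distribution(lines):
    """Count how many samples each label has in tab-separated `text<TAB>label` rows."""
    reader = csv.reader(lines, delimiter="\t", quoting=csv.QUOTE_NONE)
    return Counter(int(row[1]) for row in reader if len(row) == 2)


# Hypothetical stand-in for train.txt; the real file has 180,000 rows.
sample = io.StringIO(
    "标题一\t0\n"
    "标题二\t1\n"
    "标题三\t0\n"
    "标题四\t1\n"
)

counts = label_distribution(sample)
# Balanced means every class has the same number of samples.
is_balanced = len(set(counts.values())) == 1
print(counts, is_balanced)
```

With the DataFrames loaded above, `train_df['label'].value_counts()` would give the same information in one call.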

分析数据

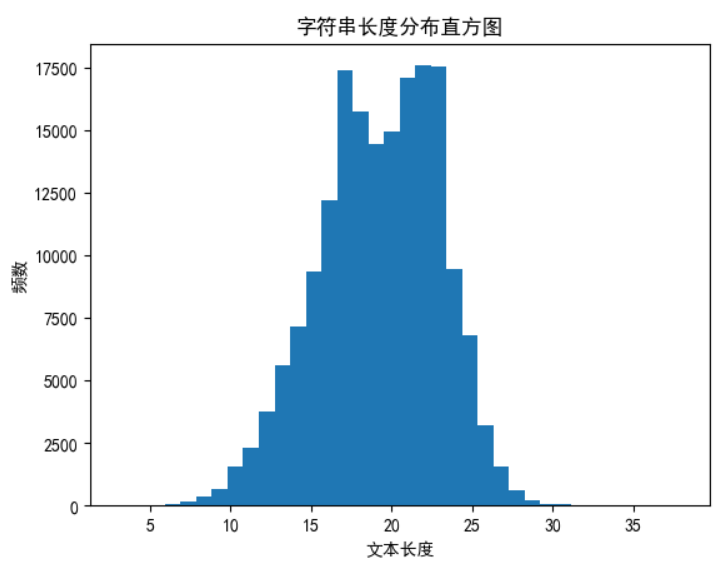

新闻标题的长度在 5~35之间。

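import matplotlib.pyplot as plt

# Use SimHei as the global font; it supports the Chinese labels used below
plt.rcParams['font.sans-serif'] = ['SimHei']
# Keep the minus sign '-' from being rendered as a box
plt.rcParams['axes.unicode_minus'] = False

# Compute the length of each title and count how often each length occurs
length_counts = train_df['text'].apply(len).value_counts().sort_index()

# Draw the histogram
plt.hist(length_counts.index, bins=len(length_counts), weights=length_counts.values)
plt.xlabel('文本长度')  # "text length"
plt.ylabel('频数')  # "frequency"
plt.title('字符串长度分布直方图')  # "histogram of string lengths"
plt.show()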

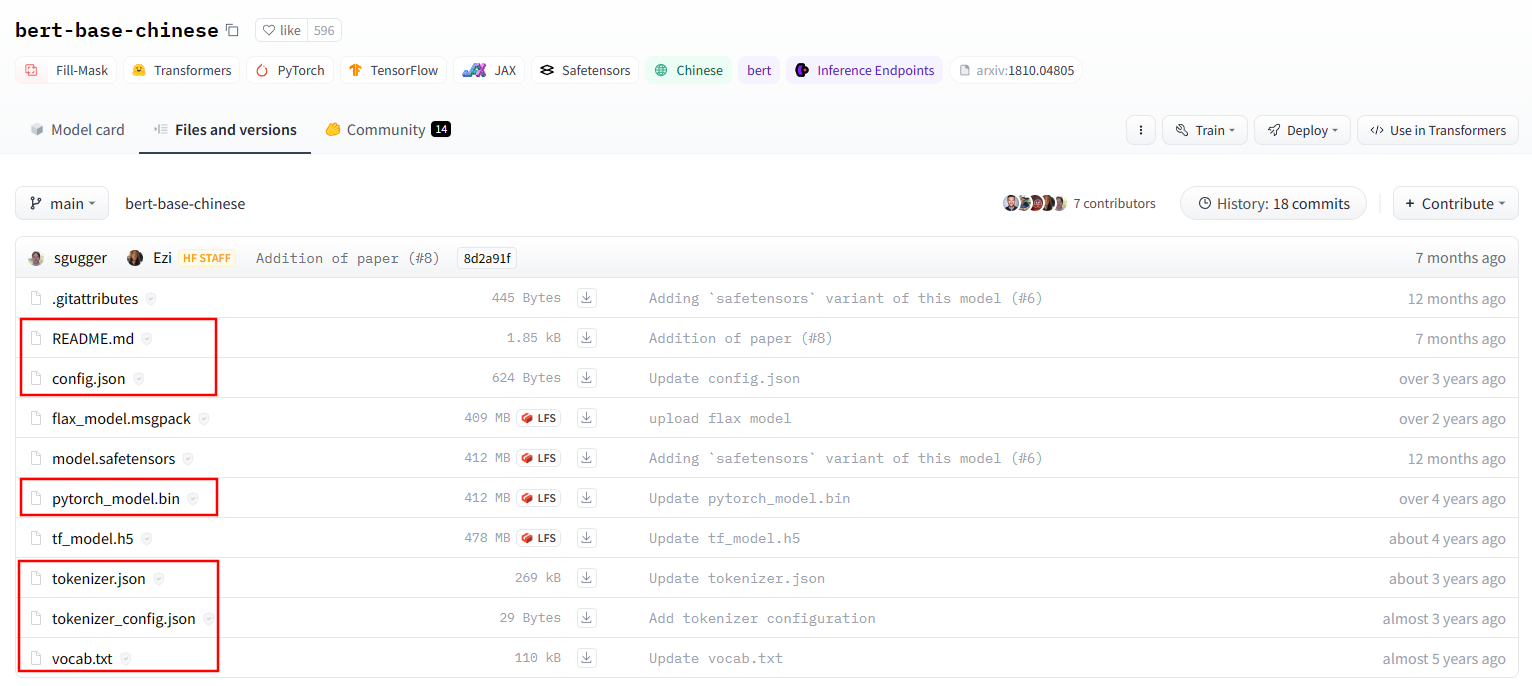
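
The length distribution is what later motivates the choice of `max_length`. The sketch below shows one way to estimate how many titles fit under a given `max_length` once the two special tokens `[CLS]` and `[SEP]` are counted. It assumes at most one token per character, which is an upper bound for `bert-base-chinese` (runs of digits or Latin letters may merge into fewer tokens); the function name and the sample titles are hypothetical.

```python
def coverage_by_max_length(texts, max_length, num_special_tokens=2):
    """Fraction of texts that fit within max_length once [CLS] and [SEP] are added.

    Uses the character count as an upper bound on the token count.
    """
    budget = max_length - num_special_tokens
    fitting = sum(1 for t in texts if len(t) <= budget)
    return fitting / len(texts)


# Hypothetical titles of 5, 20, 33 and 35 characters.
titles = ["新" * 5, "新" * 20, "新" * 33, "新" * 35]

for max_length in (32, 35, 37):
    print(max_length, coverage_by_max_length(titles, max_length))
```

In this toy sample, a 35-character title only fits untruncated when `max_length` is at least 37.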

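Downloading the Pre-trained Model

Go to Hugging Face, download the pre-trained model files manually, and place them in a dedicated folder so they can be reused.

# Downloading file by file through the web page is tedious, and Hugging Face cannot be reached directly from mainland China, so a more elegant way to download the model is noted here.
# snapshot_download writes the files directly into local_dir, so it is set to the BERT_PATH used later.
# from huggingface_hub import snapshot_download
# snapshot_download(repo_id="bert-base-chinese",
#                   ignore_patterns=["*.msgpack", "*.h5", "*.safetensors"],
#                   local_dir="/home/yzw/plm/bert-base-chinese", local_dir_use_symlinks=False)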

Building the Dataset

Call BERT's tokenizer to tokenize the text. It returns a dictionary containing input_ids, token_type_ids, and attention_mask.

from transformers import BertTokenizer

# Path to the downloaded pre-trained files
BERT_PATH = '/home/yzw/plm/bert-base-chinese'

# Load the tokenizer
tokenizer = BertTokenizer.from_pretrained(BERT_PATH)

example_text = '我爱北京天安门。'
bert_input = tokenizer(example_text,
                       padding='max_length',
                       max_length=10,
                       truncation=True,
                       return_tensors="pt")  # "pt" returns PyTorch tensors
print(bert_input)
# {'input_ids': tensor([[ 101, 2769, 4263, 1266, 776, 1921, 2128, 7305, 511, 102]]), 'token_type_ids': tensor([[0, 0, 0, 0, 0, 0, 0, 0, 0, 0]]), 'attention_mask': tensor([[1, 1, 1, 1, 1, 1, 1, 1, 1, 1]])}
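
In the printed example the sentence fills all 10 positions exactly, so no padding is visible. The sketch below is a toy, character-level stand-in for the tokenizer call (it is not `BertTokenizer` itself) that shows what padding and truncation do to `input_ids` and `attention_mask`. The token ids are copied from the output above, `[PAD] = 0` is the padding id in the `bert-base-chinese` vocabulary, and `toy_encode` and `TOY_VOCAB` are hypothetical names.

```python
# Token ids copied from the printed tokenizer output; 101 = [CLS], 102 = [SEP], 0 = [PAD].
CLS_ID, SEP_ID, PAD_ID = 101, 102, 0
TOY_VOCAB = {"我": 2769, "爱": 4263, "北": 1266, "京": 776,
             "天": 1921, "安": 2128, "门": 7305, "。": 511}


def toy_encode(text, max_length):
    """Character-level imitation of tokenizer(..., padding='max_length', truncation=True)."""
    ids = [TOY_VOCAB[ch] for ch in text]
    ids = ids[: max_length - 2]              # truncate, leaving room for [CLS] and [SEP]
    ids = [CLS_ID] + ids + [SEP_ID]
    real_tokens = len(ids)
    pad = max_length - real_tokens
    return {
        "input_ids": ids + [PAD_ID] * pad,
        "token_type_ids": [0] * max_length,   # single-sentence input: all zeros
        "attention_mask": [1] * real_tokens + [0] * pad,  # 0 marks padding positions
    }


encoded = toy_encode("我爱北京天安门。", max_length=12)
print(encoded["input_ids"])
print(encoded["attention_mask"])
```

The attention mask is what lets the model ignore the padded positions, which is why it is passed to the model together with `input_ids`.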

Subclass Dataset and implement the __init__, __getitem__, and __len__ methods so that the data can be iterated over during training.

from torch.utils.data import Dataset, DataLoader


class MyDataset(Dataset):
    def __init__(self, df):
        # Each tokenizer output is a dict of tensors, which the DataLoader can collate automatically
        self.texts = [tokenizer(text,
                                padding='max_length',  # pad to max_length
                                max_length=35,  # the data analysis found a maximum title length of 35
                                truncation=True,  # [CLS] and [SEP] count toward max_length, so the longest titles are truncated
                                return_tensors="pt")
                      for text in df['text']]
        # The DataLoader converts these labels into a tensor when it builds a batch
        self.labels = [label for label in df['label']]

    def __getitem__(self, idx):
        return self.texts[idx], self.labels[idx]

    def __len__(self):
        return len(self.labels)


# Tokenization makes this step slow: about 40 s
train_dataset = MyDataset(train_df)
dev_dataset = MyDataset(dev_df)
test_dataset = MyDataset(test_df)
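
Because each sample is tokenized with `return_tensors="pt"`, every stored tensor carries a leading axis of size 1, which is why the later loops call `.squeeze(1)`. The sketch below reproduces the shapes with a stand-in dataset so that it runs without the BERT files; `FakeEncodedDataset` is a hypothetical class that only mimics the structure of `MyDataset` items.

```python
import torch
from torch.utils.data import DataLoader, Dataset


class FakeEncodedDataset(Dataset):
    """Stand-in for MyDataset: each item mimics tokenizer(..., return_tensors='pt') output."""

    def __init__(self, num_samples=8, seq_length=35):
        self.items = [
            {
                "input_ids": torch.zeros(1, seq_length, dtype=torch.long),
                "attention_mask": torch.ones(1, seq_length, dtype=torch.long),
            }
            for _ in range(num_samples)
        ]
        self.labels = [i % 10 for i in range(num_samples)]

    def __getitem__(self, idx):
        return self.items[idx], self.labels[idx]

    def __len__(self):
        return len(self.labels)


loader = DataLoader(FakeEncodedDataset(), batch_size=4)
inputs, labels = next(iter(loader))

batch_shape = tuple(inputs["input_ids"].shape)                 # samples of [1, 35] stack to [4, 1, 35]
squeezed_shape = tuple(inputs["input_ids"].squeeze(1).shape)   # dropping axis 1 gives [4, 35]
print(batch_shape, squeezed_shape)
print(labels)  # the Python ints were collated into a single tensor
```

An alternative design would be to store `encoding['input_ids'][0]` per sample, or to tokenize whole batches in a custom `collate_fn`, which would make the `squeeze` unnecessary.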

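Building the Model

The model structure is fairly simple: take BERT's [CLS] output (pooled_output, i.e. the [CLS] hidden state after BERT's pooler layer), pass it through a dropout layer that randomly drops some units, feed it into a linear classification layer, and finally apply a ReLU activation to obtain the classification output. Whether the ReLU is actually useful is discussed later.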

from torch import nn
from transformers import BertModel


class BertClassifier(nn.Module):
    def __init__(self):
        super().__init__()
        self.bert = BertModel.from_pretrained(BERT_PATH)
        self.dropout = nn.Dropout(0.5)
        self.linear = nn.Linear(768, 10)  # 768 = hidden size of bert-base, 10 = number of classes
        self.relu = nn.ReLU()

    def forward(self, input_id, mask):
        # With return_dict=False, BertModel returns (sequence_output, pooled_output)
        _, pooled_output = self.bert(input_ids=input_id, attention_mask=mask, return_dict=False)
        dropout_output = self.dropout(pooled_output)
        linear_output = self.linear(dropout_output)
        final_layer = self.relu(linear_output)
        return final_layer

Training the Model

import os
import random

import numpy as np
import torch
from torch.optim import Adam
from tqdm import tqdm

# Training hyperparameters
epoch = 5
batch_size = 64
lr = 1e-5
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
random_seed = 1999
save_path = './bert_checkpoint'


def setup_seed(seed):
    # Seed every random number generator in use so that runs are repeatable
    torch.manual_seed(seed)
    torch.cuda.manual_seed_all(seed)
    np.random.seed(seed)
    random.seed(seed)
    torch.backends.cudnn.deterministic = True


setup_seed(random_seed)


def save_model(save_name):
    # Save the weights of the global `model` under save_path
    os.makedirs(save_path, exist_ok=True)
    torch.save(model.state_dict(), os.path.join(save_path, save_name))

# Define the model
model = BertClassifier()

# Define the loss function and the optimizer
criterion = nn.CrossEntropyLoss()
optimizer = Adam(model.parameters(), lr=lr)
model = model.to(device)
criterion = criterion.to(device)

# Build the data loaders
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
dev_loader = DataLoader(dev_dataset, batch_size=batch_size)

# Training
best_dev_acc = 0
for epoch_num in range(epoch):
    total_acc_train = 0
    total_loss_train = 0
    for inputs, labels in tqdm(train_loader):
        input_ids = inputs['input_ids'].squeeze(1).to(device)  # Shape: [batch_size, seq_length]
        masks = inputs['attention_mask'].squeeze(1).to(device)  # Shape: [batch_size, seq_length]
        labels = labels.to(device)
        output = model(input_ids, masks)
        batch_loss = criterion(output, labels)
        batch_loss.backward()
        optimizer.step()
        optimizer.zero_grad()
        acc = (output.argmax(dim=1) == labels).sum().item()
        total_acc_train += acc
        total_loss_train += batch_loss.item()

    # ----------- Validation -----------
    model.eval()
    total_acc_val = 0
    total_loss_val = 0
    with torch.no_grad():
        for inputs, labels in dev_loader:
            input_ids = inputs['input_ids'].squeeze(1).to(device)  # Shape: [batch_size, seq_length]
            masks = inputs['attention_mask'].squeeze(1).to(device)  # Shape: [batch_size, seq_length]
            labels = labels.to(device)
            output = model(input_ids, masks)
            batch_loss = criterion(output, labels)
            acc = (output.argmax(dim=1) == labels).sum().item()
            total_acc_val += acc
            total_loss_val += batch_loss.item()

    # batch_loss is already a per-batch mean, so the loss is averaged over the number of batches;
    # accuracy is a count of correct samples, so it is divided by the number of samples
    print(f'''Epochs: {epoch_num + 1}
    | Train Loss: {total_loss_train / len(train_loader): .3f}
    | Train Accuracy: {total_acc_train / len(train_dataset): .3f}
    | Val Loss: {total_loss_val / len(dev_loader): .3f}
    | Val Accuracy: {total_acc_val / len(dev_dataset): .3f}''')

    # Save the best model so far
    if total_acc_val / len(dev_dataset) > best_dev_acc:
        best_dev_acc = total_acc_val / len(dev_dataset)
        save_model('best.pt')

    # Switch back to training mode for the next epoch
    model.train()

# Save the final model and the optimizer state
save_model('last.pt')
torch.save(optimizer.state_dict(), os.path.join(save_path, 'optimizer.pt'))
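
`nn.CrossEntropyLoss()` returns the mean loss over a batch by default, so batch losses have to be weighted by batch size to obtain an exact per-sample average for an epoch, while accuracy is a simple count. The sketch below illustrates that bookkeeping in plain Python with made-up numbers; `EpochMeter` is a hypothetical helper, not part of the training code above.

```python
class EpochMeter:
    """Accumulates per-batch mean losses and correct counts into epoch-level metrics."""

    def __init__(self):
        self.loss_sum = 0.0  # running sum of per-sample losses
        self.correct = 0     # running count of correct predictions
        self.seen = 0        # running count of samples

    def update(self, batch_mean_loss, num_correct, batch_size):
        # A batch mean times the batch size recovers the batch's summed loss.
        self.loss_sum += batch_mean_loss * batch_size
        self.correct += num_correct
        self.seen += batch_size

    @property
    def loss(self):
        return self.loss_sum / self.seen

    @property
    def accuracy(self):
        return self.correct / self.seen


meter = EpochMeter()
meter.update(batch_mean_loss=0.50, num_correct=60, batch_size=64)
meter.update(batch_mean_loss=0.25, num_correct=30, batch_size=32)  # smaller final batch
print(meter.loss, meter.accuracy)
```

When all batches have the same size, averaging the batch means over the number of batches gives the same loss value.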

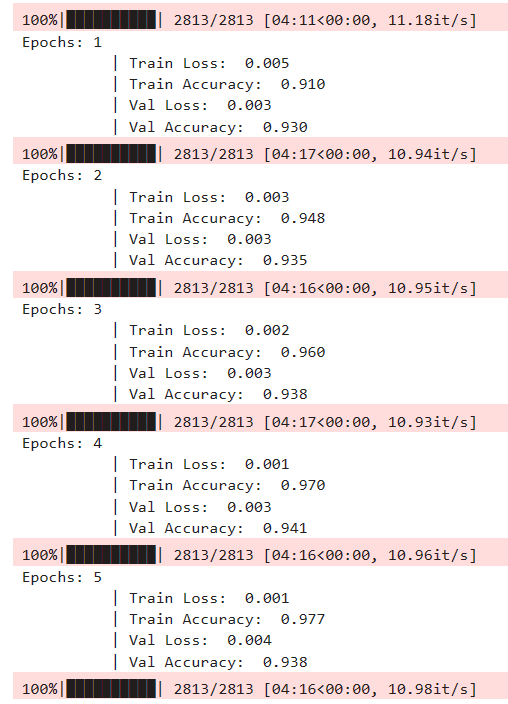

batch_size设置为64,占用显存约4GB。迭代训练5epoch,每个epoch耗时4分钟,第4个epoch后开发集准确率出现下降,训练过程如下图所示:
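
Since dev-set accuracy can drop in later epochs, the training loop keeps the checkpoint with the highest dev accuracy as `best.pt`. The selection rule can be sketched in isolation as below; the accuracy values are made up for illustration and are not the measured results of this run, and `best_epoch` is a hypothetical helper.

```python
def best_epoch(dev_accuracies):
    """Return (1-based epoch, accuracy) of the checkpoint that would be kept as best.pt.

    Uses a strict `>` comparison like the training loop, so on a tie the earlier epoch stays.
    """
    best_acc, best_idx = 0.0, None
    for epoch_idx, acc in enumerate(dev_accuracies, start=1):
        if acc > best_acc:
            best_acc, best_idx = acc, epoch_idx
    return best_idx, best_acc


# Made-up dev accuracies for five epochs, peaking at epoch 4 and then dropping.
history = [0.930, 0.938, 0.941, 0.944, 0.942]
print(best_epoch(history))
```

Evaluating `best.pt` rather than `last.pt` on the test set avoids reporting a checkpoint that has already started to overfit.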

Evaluating the Model

import os

import torch
from torch.utils.data import DataLoader


def evaluate(model, dataset, device, batch_size=128):
    model.eval()
    test_loader = DataLoader(dataset, batch_size=batch_size)
    total_acc_test = 0
    total_loss_test = 0
    criterion = torch.nn.CrossEntropyLoss().to(device)
    try:
        with torch.no_grad():
            for test_input, test_label in test_loader:
                input_id = test_input['input_ids'].squeeze(1).to(device)  # Shape: [batch_size, seq_length]
                mask = test_input['attention_mask'].squeeze(1).to(device)  # Shape: [batch_size, seq_length]
                test_label = test_label.to(device)
                output = model(input_id, mask)
                batch_loss = criterion(output, test_label)
                acc = (output.argmax(dim=1) == test_label).sum().item()
                total_acc_test += acc
                total_loss_test += batch_loss.item()
        test_accuracy = total_acc_test / len(dataset)
        # batch_loss is a per-batch mean, so average it over the number of batches
        test_loss = total_loss_test / len(test_loader)
        print(f'Test Accuracy: {test_accuracy:.3f} | Test Loss: {test_loss:.3f}')
        return test_accuracy, test_loss
    except Exception as e:
        print(f"Error during evaluation: {str(e)}")
        raise


# Load the model (map_location lets the checkpoint load on a machine without the original GPU)
model = BertClassifier()
save_path = './bert_checkpoint'
model.load_state_dict(torch.load(os.path.join(save_path, 'best.pt'), map_location=device))
model = model.to(device)
model.eval()

# Evaluate the model
evaluate(model, test_dataset, device)

The model that performed best on the development set reaches an accuracy of 0.946 on the test set.
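
A single overall accuracy can hide differences between classes. The sketch below shows one way a per-class breakdown could be computed from lists of true and predicted class ids. The labels, predictions, and class names are made up for illustration, and `per_class_accuracy` is a hypothetical helper.

```python
from collections import Counter


def per_class_accuracy(y_true, y_pred, label_names):
    """Accuracy per class: correct predictions divided by the true samples of that class."""
    totals = Counter(y_true)
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label_names[c]: correct[c] / totals[c] for c in sorted(totals)}


# Made-up labels and predictions for three classes; the names are placeholders.
names = ["finance", "society", "science"]
y_true = [0, 0, 1, 1, 1, 2, 2, 2, 2, 1]
y_pred = [0, 1, 1, 1, 0, 2, 2, 2, 1, 1]

report = per_class_accuracy(y_true, y_pred, names)
print(report)
```

In the evaluation loop, the two lists could be filled from `test_label.tolist()` and `output.argmax(dim=1).tolist()` for each batch.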

Interactive Inference

import torch


def predict_news_title(model, tokenizer, real_labels, device):
    model.eval()
    while True:
        try:
            text = input('News title (type "exit" to quit): ')
        except EOFError:
            # stdin was closed; the generic handler below would otherwise retry forever
            break
        if text.lower() == 'exit':
            print("Exiting.")
            break
        try:
            bert_input = tokenizer(text,
                                   padding='max_length',
                                   max_length=35,
                                   truncation=True,
                                   return_tensors="pt")
            input_ids = bert_input['input_ids'].to(device)  # Shape: [1, seq_length]
            masks = bert_input['attention_mask'].to(device)  # Shape: [1, seq_length]
            with torch.no_grad():
                output = model(input_ids, masks)
            pred = output.argmax(dim=1).item()
            print(f'Predicted category: {real_labels[pred]}')
        except Exception as e:
            print(f"Prediction error: {str(e)}")
            continue


# Example call (assumes model, tokenizer, real_labels, and device are already defined)
predict_news_title(model, tokenizer, real_labels, device)

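# Sample run

News title: 男子炫耀市中心养烈性犬?警方介入 (Man shows off keeping an aggressive dog downtown? Police step in)

society

News title: 北京大学召开校运动会 (Peking University holds its school sports meet)

society

News title: 深度学习大会在北京召开 (Deep learning conference held in Beijing)

science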
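
The interactive loop prints only the top class. To see how confident the model is, the logits can be turned into probabilities with a softmax. The following is a minimal sketch in plain Python with made-up logits and placeholder label names; `softmax` and `top_k` are hypothetical helpers, not part of the code above.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits, label_names, k=3):
    """Return the k most probable (label, probability) pairs, highest first."""
    probs = softmax(logits)
    ranked = sorted(zip(label_names, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Made-up logits for a four-class example; the label names are placeholders.
labels = ["finance", "society", "science", "sports"]
logits = [0.0, 2.0, 1.0, 0.0]

top2 = top_k(logits, labels, k=2)
for name, prob in top2:
    print(f"{name}: {prob:.3f}")
```

Inside `predict_news_title`, the logits for the single input would be `output[0].tolist()`, and `real_labels` would take the place of the placeholder names.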

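Discussion

- Is a ReLU activation needed after the linear classification layer?

ReLU gives a model non-linearity, but BERT already contains many non-linear activations, so adding another one seems unnecessary. Moreover, the linear classification layer feeds directly into the loss computation; applying ReLU there changes the output distribution by forcing every negative logit to zero. Could that hurt the model's ability to learn?

Experiments show that without ReLU the training speed is essentially unchanged, but dev-set metrics start to drop after 3 epochs. The final validation accuracy is 0.944, a slight improvement. There is indeed no need to add a ReLU activation after the linear classification layer.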
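
One way to see the concern about clipping is to compare the cross-entropy of the same logits with and without ReLU. The sketch below uses plain Python and made-up 10-class logits, and computes the per-sample softmax cross-entropy by hand. It is an illustration of the clipping effect only, not a reproduction of the experiment.

```python
import math


def cross_entropy(logits, target):
    """Softmax cross-entropy of one sample: log-sum-exp of the logits minus the target logit."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def relu(logits):
    return [max(0.0, x) for x in logits]


# Made-up logits: class 0 is correct, the other nine classes are pushed negative.
raw = [4.0] + [-4.0] * 9

loss_raw = cross_entropy(raw, target=0)
loss_relu = cross_entropy(relu(raw), target=0)
print(f"without ReLU: {loss_raw:.4f}")
print(f"with ReLU:    {loss_relu:.4f}")
```

With ReLU, the nine wrong-class logits are clipped to 0, so the loss for the same pre-activation values is higher, and the clipped logits receive zero gradient. The model can then lower the loss only by making the correct logit larger, not by pushing the wrong ones down.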