Making Sense of AI: From LLMs to Agents and Beyond

Keeping up with AI today is exhausting.

New ideas show up every week — from large language models to agents and increasingly complex systems — and it’s hard to tell how they all fit together. If it feels confusing, it’s not because you’re behind. It’s because the concepts are evolving faster than most explanations.

- Why do chatbots feel powerful, yet limited?

- Why is there so much excitement around agents?

- And what actually changes when AI starts to do things, not just talk? Where is all of this heading?

This article is an attempt to slow things down — and make sense of how these ideas connect, what problems they’re solving, and where this direction is leading.

LLMs Are the Brain — But Brains Alone Don’t Act

Most people today are familiar with Large Language Models (LLMs) through products like ChatGPT or Gemini. They are remarkable at reasoning, summarizing, and conversation. They think in language.

But there is an important limitation: on their own, LLMs don’t actually do anything.

They don’t run scripts, click buttons, modify files, or change the state of a system. Without external tools, an LLM can describe actions perfectly — while leaving the real world untouched.

This gap between understanding and execution is exactly why Agents exist.

Agents: When Language Models Get Hands

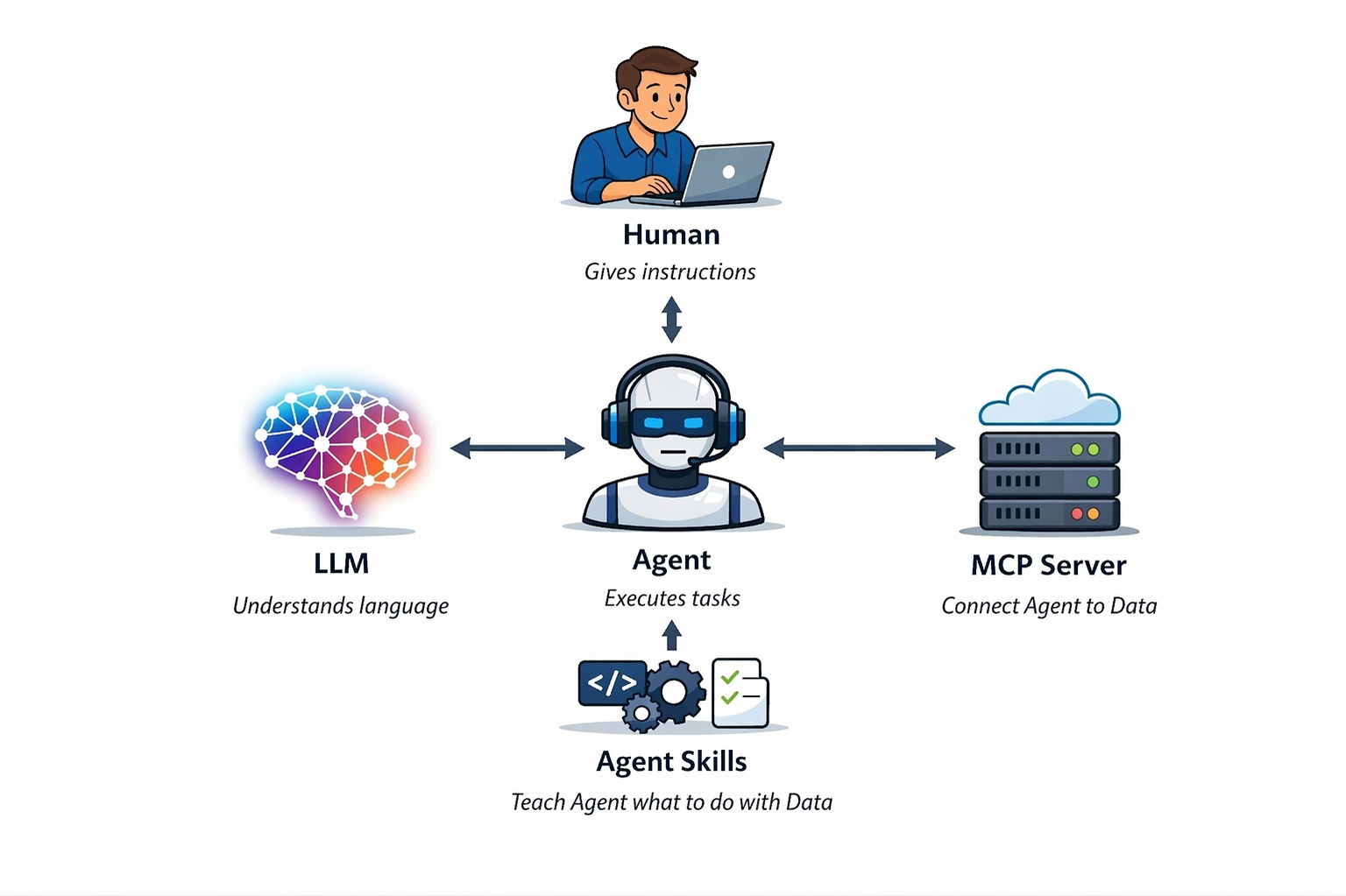

An Agent can be understood as an LLM equipped with tools and a control loop.

Those tools might include APIs, file systems, browsers, scripts, or operating‑system actions. Once tools are involved, the system moves beyond explanation and into execution.
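To make the "LLM plus tools plus control loop" idea concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: `fake_llm` stands in for a real model call, `list_files` is a hypothetical tool backed by a fake in-memory folder, and the `CALL` / `FINAL` text convention is invented for this example rather than taken from any real agent framework.

```python
from typing import Callable


def list_files(folder: str) -> str:
    """Hypothetical tool: looks up a folder in a fake in-memory file system."""
    fake_fs = {"reports": ["q1.txt", "q2.txt"]}
    return ", ".join(fake_fs.get(folder, []))


# Tool registry: the only actions this agent is allowed to take.
TOOLS: dict[str, Callable[[str], str]] = {"list_files": list_files}


def fake_llm(history: list[str]) -> str:
    """Stand-in for a model call: requests a tool first, then answers."""
    if not any(line.startswith("TOOL_RESULT") for line in history):
        return "CALL list_files reports"
    last_result = history[-1].removeprefix("TOOL_RESULT ")
    return "FINAL The reports folder contains: " + last_result


def run_agent(task: str, max_steps: int = 5) -> str:
    """Control loop: ask the model, run the requested tool, feed the result back."""
    history = [f"TASK {task}"]
    for _ in range(max_steps):
        reply = fake_llm(history)
        if reply.startswith("FINAL "):
            return reply.removeprefix("FINAL ")
        # Reply format assumed here: "CALL <tool_name> <argument>"
        _, tool_name, argument = reply.split(" ", 2)
        result = TOOLS[tool_name](argument)
        history.append(f"TOOL_RESULT {result}")
    return "Stopped: step limit reached"


print(run_agent("What is in the reports folder?"))
```

The model only ever produces text. It is the loop around it that turns a request into an action and feeds the result back, and the step limit keeps the loop from running forever.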

We already see this pattern in different environments:

- Systems like IBM Bob operate through a GUI‑based IDE

- Claude Code lives inside a developer’s terminal

Both can be viewed as agent‑style systems — designed primarily for technical users.

What makes newer platforms like OpenClaw interesting isn’t just the technology, but who they are built for.

For many non‑developers, OpenClaw is the first time they’ve seen AI actually carry out real tasks. Instead of chatting with a model, users experience an agent acting on their behalf.

Imagine sending a WhatsApp message to a “Clawbot” while you’re away from home — and that bot, running on your personal computer, receives the instruction and executes tasks: organizing files, running scripts, or preparing outputs.

This shift — from passive language models to systems that can act, often through coordinated agents — is commonly described as “agentic AI.”

Teaching Agents: MCP vs. Agent Skills

Once agents exist, the next challenge is obvious:

How do we teach them what to do?

Two common approaches have emerged.

MCP: Connecting Agents to Data

Model Context Protocol (MCP) focuses on connecting agents to external systems such as GitHub or Jira.

Configuration is often simple — sometimes just a couple of parameters — assuming the service exposes an MCP endpoint. Once connected, the agent can access the data directly.

The trade-off is that MCP setups tend to load information into the model’s context eagerly: tool descriptions are typically added up front, and tool results are returned in full. This can quickly increase token usage, which directly impacts cost, and introduce irrelevant noise.
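As a rough illustration of what "connecting" means on the wire: MCP messages follow JSON-RPC 2.0, and the specification defines methods such as `tools/list` (discover what a server offers) and `tools/call` (invoke one tool). The sketch below only builds those request messages and does not talk to a real server. The tool name `search_issues` and its arguments are hypothetical.

```python
import json
from typing import Optional


def jsonrpc_request(request_id: int, method: str, params: Optional[dict] = None) -> str:
    """Build a JSON-RPC 2.0 request string, the envelope MCP messages use."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Step 1: ask the server which tools it exposes.
list_tools = jsonrpc_request(1, "tools/list")

# Step 2: call one tool by name. "search_issues" is a made-up tool name.
call_tool = jsonrpc_request(
    2,
    "tools/call",
    {"name": "search_issues", "arguments": {"query": "login bug"}},
)

print(list_tools)
print(call_tool)
```

Whatever the server returns for these calls ends up in the model’s context, which is where the token cost described above comes from.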

Agent Skills: Teaching Agents How to Use Data

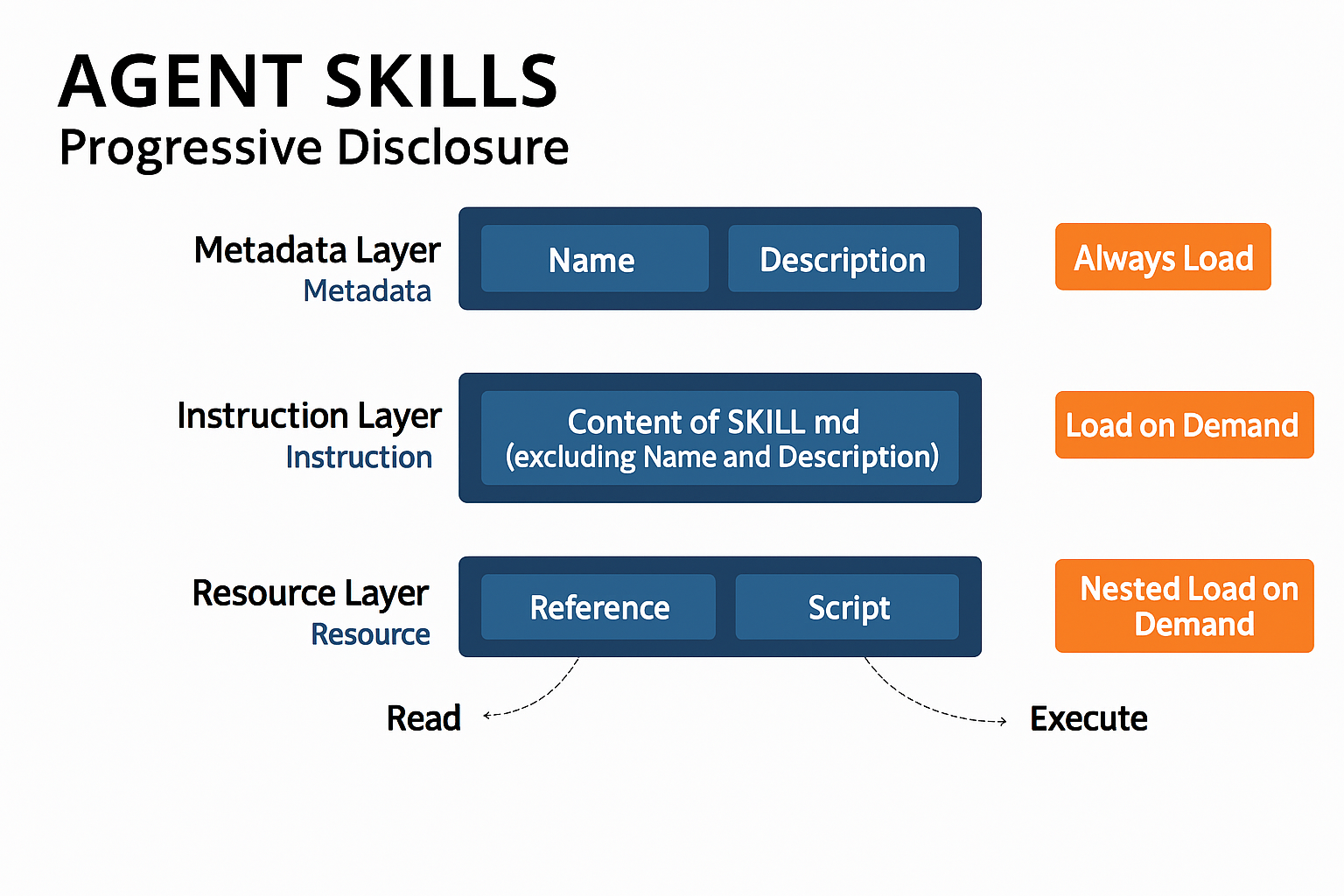

Agent Skills take a different approach.

Rather than loading everything upfront, they rely on Progressive Disclosure and Load on Demand:

- Only load what is needed

- Only when it is needed

Consider a Teams meeting. After the call, a full transcript is generated. That transcript is data — but most tasks don’t require reading every word.

A Skill can extract just the action items without flooding the LLM’s limited context window with the entire conversation. Details like side discussions or filler words are processed only if there is a functional reason to do so.

The result is a system that stays focused, avoids unnecessary noise, and uses tokens more efficiently.
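A small sketch of progressive disclosure, under stated assumptions: the `Skill` class, the skill names, and the `ACTION:` marker in the transcript are all invented for illustration and do not reflect any specific Skills format. The idea it shows is that only one-line descriptions are always in context, the full instructions are loaded when a skill is selected, and the transcript is processed outside the model so that only the action items come back.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    description: str              # one-line summary, always in context
    load_body: Callable[[], str]  # full instructions, loaded only when selected


def extract_action_items(transcript: str) -> list[str]:
    """Runs outside the model: keeps only lines tagged with 'ACTION:'."""
    items = []
    for line in transcript.splitlines():
        if "ACTION:" in line:
            items.append(line.split("ACTION:", 1)[1].strip())
    return items


SKILLS = {
    "meeting-actions": Skill(
        description="Extract action items from a meeting transcript.",
        load_body=lambda: "Run extract_action_items on the transcript and report the results.",
    ),
    "expense-report": Skill(
        description="Summarize expenses from receipts.",
        load_body=lambda: "Group receipts by category and total each group.",
    ),
}


def build_context(selected: Optional[str] = None) -> str:
    """Always include the short index; add a skill body only on demand."""
    lines = [f"- {name}: {skill.description}" for name, skill in SKILLS.items()]
    if selected is not None:
        lines.append(SKILLS[selected].load_body())
    return "\n".join(lines)


transcript = """Alice: Thanks for joining, everyone.
Bob: ACTION: Send the budget draft by Friday.
Alice: Um, so, about lunch...
Carol: ACTION: Book the review meeting."""

print(build_context())                   # index only
print(build_context("meeting-actions"))  # index plus one loaded skill
print(extract_action_items(transcript))  # only the lines that matter
```

The small talk and filler in the transcript never reach the model. Only the two extracted action items do.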

In simple terms:

- MCP connects agents to data

- Agent Skills teach agents what to do with data

Determinism vs. Intelligence

LLMs are inherently non‑deterministic. Ask the same question twice, and you may get different answers.

Agents, tools, MCP servers, and Skills are different. They are:

- Designed by humans

- Constrained by rules

- More predictable and auditable
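The contrast can be sketched in a few lines. This is a toy model, not how any real LLM decodes text: `sampled_reply` simply draws from a fixed list of invented replies to mimic sampling, while `convert` is a hypothetical tool whose output depends only on its inputs. The exchange rate used is an arbitrary illustrative number.

```python
import random

REPLIES = ["It will rise.", "It will fall.", "It is unclear."]


def sampled_reply(rng: random.Random) -> str:
    """Stand-in for LLM sampling: draws one reply from a weighted distribution."""
    return rng.choices(REPLIES, weights=[0.4, 0.4, 0.2], k=1)[0]


def convert(amount_usd: float, usd_to_cad: float) -> float:
    """A tool: the same inputs always produce the same output."""
    return round(amount_usd * usd_to_cad, 2)


rng = random.Random()  # unseeded, so runs can differ from each other
answers = [sampled_reply(rng) for _ in range(10)]
print(answers)  # a mix of replies that varies between runs

# The rate 1.35 is an illustrative value, not real market data.
print(convert(100, 1.35) == convert(100, 1.35))  # always True
```

This is also why tool calls are easier to audit: a tool result can be reproduced exactly, while a sampled answer can only be observed.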

Looking ahead, it’s reasonable to imagine three primary actors: Humans, LLMs, and increasingly capable “Super Agents.”

Many of today’s helpers (MCP servers, Skills, and orchestration layers) are transitional layers that will eventually be absorbed into more integrated agent systems.

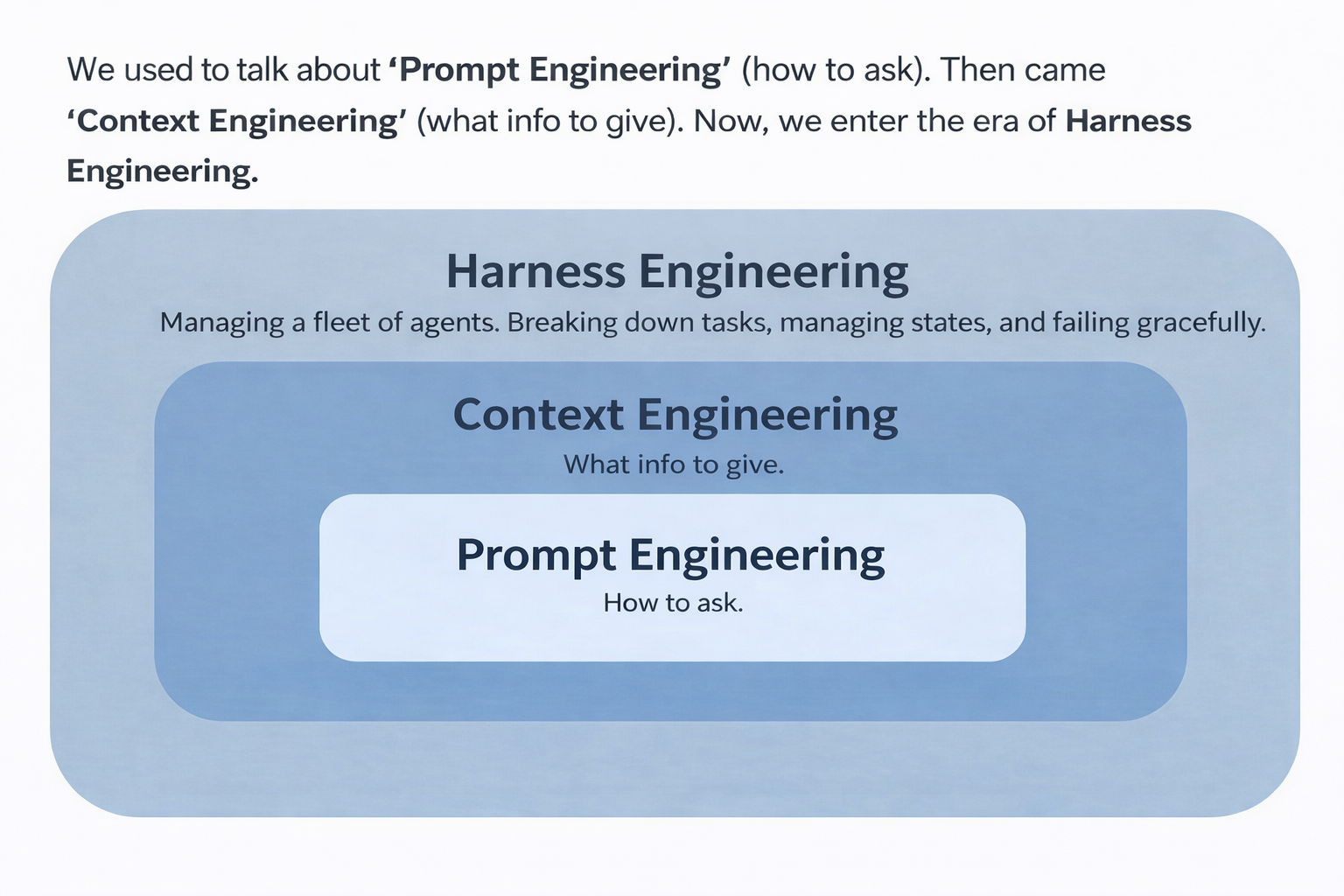

From Prompt Engineering to Harness Engineering

The industry’s focus has evolved in stages:

- Prompt Engineering — how to ask better questions

- Context Engineering — what information to provide

- Harness Engineering — how to manage agent systems

Harness Engineering is about orchestration:

- Breaking tasks into steps

- Managing state and constraints

- Validating critical actions

- Recovering from failures

- Coordinating agent lifecycles, approvals, and handoffs
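The responsibilities listed above can be sketched as a tiny harness. This is a simplified illustration under assumptions, not a reference design: the `Step` structure, the `run_harness` function, and the example steps are all hypothetical, and real harnesses handle far more (persistence, timeouts, parallel agents).

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    action: Callable[[dict], None]    # does the work, mutating shared state
    validate: Callable[[dict], bool]  # checks the result after the action
    needs_approval: bool = False      # critical steps wait for a human


def run_harness(steps, state, approve, max_retries=2):
    """Run steps in order with approval gates, validation, and retries."""
    log = []
    for step in steps:
        if step.needs_approval and not approve(step.name):
            log.append(f"{step.name}: blocked, approval denied")
            break
        for attempt in range(1, max_retries + 2):
            try:
                step.action(state)
                if step.validate(state):
                    log.append(f"{step.name}: ok (attempt {attempt})")
                    break
                error = "validation failed"
            except Exception as exc:
                error = str(exc)
            log.append(f"{step.name}: attempt {attempt} failed ({error})")
        else:
            # Inner loop ran out of attempts: stop the whole run.
            log.append(f"{step.name}: gave up")
            break
    return log


calls = {"fetch": 0}


def flaky_fetch(state):
    """Fails on the first call to simulate a transient error."""
    calls["fetch"] += 1
    if calls["fetch"] == 1:
        raise RuntimeError("timeout")
    state["data"] = [3, 1, 2]


def sort_data(state):
    state["data"] = sorted(state["data"])


steps = [
    Step("fetch", flaky_fetch, lambda s: "data" in s),
    Step("sort", sort_data, lambda s: s["data"] == sorted(s["data"])),
    Step("publish", lambda s: s.update(published=True),
         lambda s: s.get("published") is True, needs_approval=True),
]

state = {}
log = run_harness(steps, state, approve=lambda name: True)
print("\n".join(log))
```

In this run the fetch step fails once, is retried, and succeeds. The publish step only executes because the `approve` callback says yes. Swapping that callback for a real human prompt is where approvals and handoffs would plug in.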

This shift reflects a broader trend. LLM capabilities are maturing, and continued investment in ever-larger models appears to yield diminishing marginal returns. As a result, innovation is moving up the stack, toward agent systems and applications.

Rules, Verification, and Product Thinking

Agents need rules — but more rules don’t necessarily mean better behavior.

Overloading a rule file (for example, agent.md) often makes agents worse. When everything is emphasized, nothing is.

A more effective strategy is iterative:

- When an agent makes a mistake, add one precise guardrail

- Remove outdated rules as behavior improves

One rule stands out as universal:

An agent should never verify its own work.

A coding agent will almost always believe its output is correct. Verification must be handled by a separate inspector agent.
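Here is a minimal sketch of that separation, with every name assumed for illustration. `coding_agent` is a stand-in that returns deliberately buggy code, `self_report` mimics an agent grading its own work, and `inspector` checks the same code against independent test cases. A real inspector would run untrusted code in a sandbox rather than calling `exec` directly.

```python
def coding_agent(task: str) -> str:
    """Stand-in for a coding agent: returns source code with a subtle bug."""
    return "def add(a, b):\n    return a - b\n"


def self_report(source: str) -> bool:
    """What the producing agent says about its own work: always confident."""
    return True


def inspector(source: str, cases) -> list[str]:
    """Independent check: run the code against cases the author did not write."""
    namespace = {}
    exec(source, namespace)  # illustration only; sandbox this in practice
    func = namespace["add"]
    failures = []
    for args, expected in cases:
        actual = func(*args)
        if actual != expected:
            failures.append(f"add{args} returned {actual}, expected {expected}")
    return failures


source = coding_agent("Write add(a, b)")
cases = [((1, 2), 3), ((0, 0), 0)]

print("Self-report says correct:", self_report(source))
print("Inspector findings:", inspector(source, cases))
```

The self-report passes while the inspector catches the bug, because the inspector’s verdict comes from evidence the producing agent did not control.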

As agent systems grow, engineers who enjoy isolated problem‑solving may find the transition challenging. Those with a strong product mindset — who can break tasks down, set constraints, and audit results — often adapt more easily.

Work shifts from writing in isolation to orchestrating intelligent labor.

Harnesses Are Powerful — and Temporary

Harness Engineering delivers real value, but it is not a permanent moat.

Harnesses exist to compensate for current limitations. As AI capabilities improve, some layers will disappear — only to be replaced by new ones addressing new gaps.

This is an ongoing arms race, not a one‑time breakthrough.

No GUI, Just APIs

Graphical interfaces exist for humans. Agents don’t need buttons.

In an agent‑centric future, applications without APIs effectively don’t exist. What emerges next resembles an Agent OS:

- Fewer keyboards

- Minimal screens

- Interaction through dialogue, intent, and visual feedback

- Input via touch, gaze, or other natural signals

Why LLMs Alone Won’t Get Us to AGI

Despite rapid progress, LLMs alone are unlikely to bring us to Artificial General Intelligence.

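Moravec’s paradox helps explain why: tasks humans find effortless (perception, movement, physical intuition) are extremely hard for AI, while abstract reasoning is comparatively easy. Because LLMs learn from text, they mostly cover the side of intelligence that is already easier for machines.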

LLMs summarize what has been written; they don’t truly predict what will happen.

Once, I asked a model whether the USD/CAD exchange rate would rise. It said yes, citing convincing sources. A few days later, I asked if it would fall — and it again said yes, with different sources.

LLMs reflect information; they don’t possess a mental model of the world.

True AGI likely requires World Models — systems that can represent reality, predict future states, and plan actions accordingly.

It’s also worth clarifying a common misconception: robots that can do spectacular physical feats — like kung fu or backflips — are not AGI. They excel at narrow tasks, not general intelligence.

Conclusion: Lead the Agents, Don’t Become One

AI is accelerating learning and collapsing the distance between intent and execution.

Our role is changing — from executors to orchestrators, from task‑doers to direction‑setters.

Don’t just learn how to use AI. Learn how to lead it.

Let’s build a better future — one agent at a time.